Recently, I was working with a client’s systems administration team, and they were agonizing over timing a new application upgrade. They knew the upgrade was going to take hours (we’re talking Windows here, not Unix, sigh!), and did not want to interrupt business operations. They planned on a midnight upgrade, which would necessitate overtime, and require project managers, QA stage, and others to work overnight as well.

“Wait, you’re on the cloud, right? Take advantage of cloud infrastructure and do your upgrade with zero downtime,” I remarked. Well, apparently this was novel to them, so I outlined a couple approaches my teams have taken in the past. Just thought I’d share them with you all too 🙂

The Swap

I also call this the Sleight-of-Hand. The approach is simple – take a clone of the existing server, swap it for the production server, perform upgrades on the now offline server, bring it back online and swap it back from the clone, destroy the clone.

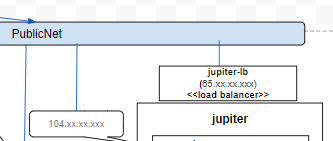

A key ingredient for this to work is to always front your servers with a load balancer, and make sure all services, DNS names, etc., “point” to the load balancer instead of the server itself. Load balancers are typically used server clusters, of course, but always include a load balancer in your architecture even if you have single-server endpoints.

Your cloud or virtualization environment will have server image, “snapshot”, or “clone” capabilities. You should already be taking advantage of that as part of disaster recovery/business continuity plans (not as a backup per se, but working in conjunction with backups). So, when you’re ready for the system upgrade, create a clone server from a recent snapshot, connect that server to the load-balancer, and disconnect your production server. Now you’re ready for upgrading the server.

Perform all upgrades on the server, test (testers can point their DNS entries directly to the server instead of load balancer), reconnect to the load balancer, and disconnect the clone. Through this whole process, no services are interrupted.

There’s one other factor to consider in this swap process: persistent objects. That is, usually files (database objects, well, that requires an additional discussion, we’ll save that for later). If you have a multi-clone server architecture you’ll already have something in place for syncing files between your clones, but single-server sys admins and developers may not be familiar with them. Well, there’s a low key way to manage. First, identify all places where your system generates files. A smart strategy here is to make sure all apps use a centrally-organized location for such files – a “/data” folder, for example, or a separate drive.

You may or may not want to include log entries in such a location – if you care about keeping a complete running log, then yes, but there are cases where you really don’t care about what was logged on the clone server during the short period it was live, so maybe you don’t centralize log entries (additional consideration: compliance requirements – PCI data security requirements may necessitate keeping a complete running log of all error or security events, for example). Anyway, as a systems administrator, work with the development team to identify all persistent files, document best practices for file organization, and go from there.

Now that you know where all central files are organized (in a /data folder and several subfolders, yes?!). It’s easy to sync up the clone server with the production server – use tools like rsync or xcopy to copy all new and updated files from the clone server to the (now online) production server.

The Leapfrog

The Leapfrog is a useful approach when you’re also upgrading the underlying hardware along with the system upgrades. Virtualization environments have made it easier to do in-place hardware component upgrades (additional CPUs, more RAM, etc.), but there are still cases where a completely new server is required.

So, you build the new server out, install and do all your system upgrades and deployments on the new server, bring it online, connect it to the load balancer, disconnect the old server. Just leapfrog over that old stone 🙂

There’s lots more to consider when doing a system leapfrog. First, how is your configuration management?! You should be tracking all configuration changes, dependent library installs, and patches on your existing server. When building a new server without a clone, you’ll typically be base operating system images and applications, so that must be followed through with all the patches, upgrades, and such that you’ve already made on the old server.

Some patches and configuration changes, though, may not be needed on the new OS (for example), so don’t just reapply them blindly. Review system documentation and patches to see what’s applicable and what’s not, and check them off accordingly. Your configuration management documents will help you keep track. You will want to keep a parallel configuration management document for the new server, and review with your team any variations between the 2 servers. This is a good time to obsolete some libraries, if you can ascertain they are not needed in the new system.

Here’s a good time to take stock of the system differences between your new and old server – is your list changes significant? Perhaps your Dev team shrugs “yeah, no problem, go ahead and upgrade the platform”, but after your configuration management comparison you may discover what’s a minor deal to them is more major at the systems level. If the configuration management delta is signicant, then you’re not talking about a Leapfrog, but a Major System Change.

The Leapfrog works well when the underlying system changes are minor – new hardwire, faster drives, additional RAM, etc. Significant OS or library changes, though – well, that’s going to require significant regression testing, likely code changes from the dev team, etc. It’s an Epic event, so plan for it accordingly.

If you’re still finding Leapfrog is your approach, complete the upgrade, then apply all data file backups from the old server to your new server. One difference though – unlike the Swap, I do not recommend restoring backups of log files to the new server. It is a new server, it should remain pristine as such. If log files are needed for compliance reasons, they should be backed up and archived according to your compliance management processes. After your data restore is complete, connect the new server to the load balancer and disconnect the old server. Create an archival image of the server, then say adieu to it.

Well, that’s it at a high level, some zero-downtime system deployments to consider, the Swap and the Leapfrog. Have you experiences similar patterns?